Project Basics:

Introduction:

I have always been fascinated by devices like the Kinect, Myo Armband, Leap Motion, NeuroSky and others of their kind. The beauty of these devices is that you do not need to worry about building proper circuits for signal acquisition and filtering. Instead, you can focus on developing projects with the different types of data these devices provide, either through their own APIs or through their support for other software such as MATLAB, LabVIEW, or even Python.

Objective:

The objective of this project was to develop a system in which the user wears a Myo Armband, which outputs the EMG signals of the wearer's arm, and a NeuroSky headset, which outputs the EEG signals of the wearer's brain, and uses both to control a prosthetic hand (the InMoov hand, thanks to Gael's open source 3D-printable designs). You can read about these devices and about InMoov on their respective websites.

Work on Myo Armband:

The Myo Armband recognizes five distinct hand gestures out of the box. These gestures are pre-defined and are recognized very reliably once the wearer makes them: fist, finger tap, rest hand, wave in/out of the palm, and finger spread. Although these gestures can be combined with a controller to make some really great home automation projects, they are not good enough for prosthetic hand movements, because I wanted this project to be as realistic as possible for people with a hand disability. So I opted to acquire the raw EMG data of the armband wearer instead.

Luckily, an online app and a Visual Studio-based open source project had already been developed to capture the raw EMG data of the Myo. However, that app (Data-Capture-App) stores the data in Microsoft Excel files only once its session is closed. This was not suitable for my project, because I needed real-time EMG data and the app would hand over the raw data only after I closed it. Because the app code was open source, I modified it so that it writes the eight-channel data to a text file in real time. I opted for plain text files (.txt) because they are easy to read in LabVIEW. Before I continue, I would like to mention that I have no expertise in Visual Studio, not even slightly. That is why I did not build a GUI in VS and visualize the real-time data in the VS app code.

Once the data was being stored in a text file in real time, I made a LabVIEW VI and used the text file read functions to read that same file while the Data Capture App was still writing to it. I know what you may be thinking: how can two different programs access the same text file at exactly the same time? Actually, it is possible. If I had tried to write to the same text file from both the LabVIEW VI and the Visual Studio app at the same moment, it would not have worked. But I was writing from the VS app and only reading from the LabVIEW VI, and that combination does work (please check the text file documentation on the Microsoft website. Hacky way? Yes, I know :) ).

Now I had the eight-channel, real-time EMG data of the Myo Armband, continuously changing over a range of -500 to 500. These values represent the scaled electrical signals of the muscles. The next question might be: which muscles' activity? There are many different muscles in the hand and forearm, and some of them are antagonists, so you cannot tell for sure which channel corresponds to which muscle. The Myo forums cleared up this confusion for me. As my goal was to build custom gestures from the data I was acquiring, I skipped the job of identifying each muscle's activity and focused on gesture recognition. One point to note: the Myo outputs EMG data at exactly 200 Hz and this rate cannot be changed, so I really had a lot of data to play with.
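The "one writer, one reader" arrangement described above can be sketched in Python. This is only an illustration of the idea, not the LabVIEW VI itself: the function names, the polling interval, and the assumed line format (eight numbers per line, separated by commas or whitespace) are hypothetical and were not taken from the Data Capture App.

```python
import time

def parse_emg_line(line, channels=8):
    """Parse one line of comma- or whitespace-separated EMG values.

    Returns a list of floats, or None if the line is malformed.
    The eight-values-per-line format is an assumption.
    """
    parts = line.replace(",", " ").split()
    if len(parts) != channels:
        return None
    try:
        return [float(p) for p in parts]
    except ValueError:
        return None

def follow_emg(path, poll_interval=0.005, max_idle_polls=None):
    """Yield EMG samples while another process appends lines to `path`.

    The reader only ever reads; the writer only ever appends.
    `max_idle_polls` (hypothetical) stops the loop after that many
    consecutive polls without new data; None means follow forever.
    """
    with open(path, "r") as f:
        pending = ""   # holds a partially written line until its newline arrives
        idle = 0
        while True:
            chunk = f.readline()
            if chunk:
                idle = 0
                pending += chunk
                if pending.endswith("\n"):
                    sample = parse_emg_line(pending)
                    pending = ""
                    if sample is not None:
                        yield sample
            else:
                idle += 1
                if max_idle_polls is not None and idle >= max_idle_polls:
                    return
                time.sleep(poll_interval)
```

Buffering the partial line matters because the reader can catch the writer halfway through a line; the sample is only parsed once the newline has arrived.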

I started gesture recognition with very simple steps. First I looked at the maximum magnitude each channel reached when I made a custom gesture. But this was probably the most 'kiddish' approach to gesture recognition I could have taken with this data. The reason? The data was fluctuating at 200 Hz with amplitudes as high as 500 and as low as -500, so I could not find suitable if-else conditions for it. :-) Next I looked at the area under the curve, after flipping every negative data point to the positive side (this was done so that positive and negative values would not cancel each other out). But this approach was probably too 'kiddish' as well.
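The rectified area-under-the-curve feature mentioned above can be written down in a few lines. This is a minimal sketch, assuming a window is a list of samples with one value per channel and using the trapezoidal rule; the function name and the choice of integration rule are illustrative, not what the original LabVIEW VI did.

```python
def rectified_area(window, sample_rate_hz=200.0):
    """Per-channel area under the rectified (absolute-value) EMG curve.

    `window` is a list of samples; each sample is a list of channel values.
    Taking abs() first stops positive and negative swings from cancelling.
    """
    if not window:
        return []
    n_channels = len(window[0])
    if len(window) < 2:
        return [0.0] * n_channels
    dt = 1.0 / sample_rate_hz  # time between samples at the Myo's 200 Hz rate
    areas = []
    for ch in range(n_channels):
        rect = [abs(sample[ch]) for sample in window]
        # Trapezoidal rule over consecutive rectified samples.
        area = sum((rect[i] + rect[i + 1]) * 0.5 * dt for i in range(len(rect) - 1))
        areas.append(area)
    return areas
```

For a signal that swings symmetrically around zero, the plain sum is close to zero whatever the muscle is doing, while the rectified area grows with the strength of the activity.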

After many searches on Google, I found a toolkit named the 'Gesture Recognition Toolkit'. This toolkit accepts raw data from any sensor, and that data can be used with many supervised and unsupervised algorithms. I have little to no knowledge of machine learning (I took an online course on the MIT website but could not grasp most of it). I did know, though, that custom gesture recognition is a 'classification problem of supervised learning': supervised, because I have the data to train the 'pipeline'. I used the 'AdaBoost algorithm' with 'class label filters' to smooth out the recognized gestures.
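To give a feel for what a class label filter does, here is a minimal Python sketch of the idea: keep the last few predicted labels and only report a gesture when it clearly dominates that buffer. It is not GRT's code; the class name, the parameter names and the default values are assumptions made for illustration.

```python
from collections import Counter, deque

class MajorityLabelFilter:
    """Smooth a stream of predicted class labels with a sliding majority vote."""

    def __init__(self, buffer_size=10, minimum_count=6, null_label=0):
        self.buffer = deque(maxlen=buffer_size)  # most recent predictions
        self.minimum_count = minimum_count       # votes needed to report a gesture
        self.null_label = null_label             # label meaning "no gesture"

    def update(self, label):
        """Add one raw prediction and return the filtered label."""
        self.buffer.append(label)
        counts = Counter(l for l in self.buffer if l != self.null_label)
        if not counts:
            return self.null_label
        best, count = counts.most_common(1)[0]
        return best if count >= self.minimum_count else self.null_label
```

The price of this smoothing is latency: a new gesture is reported only after enough of the buffer has filled with it, so a larger buffer gives steadier output but a slower response.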

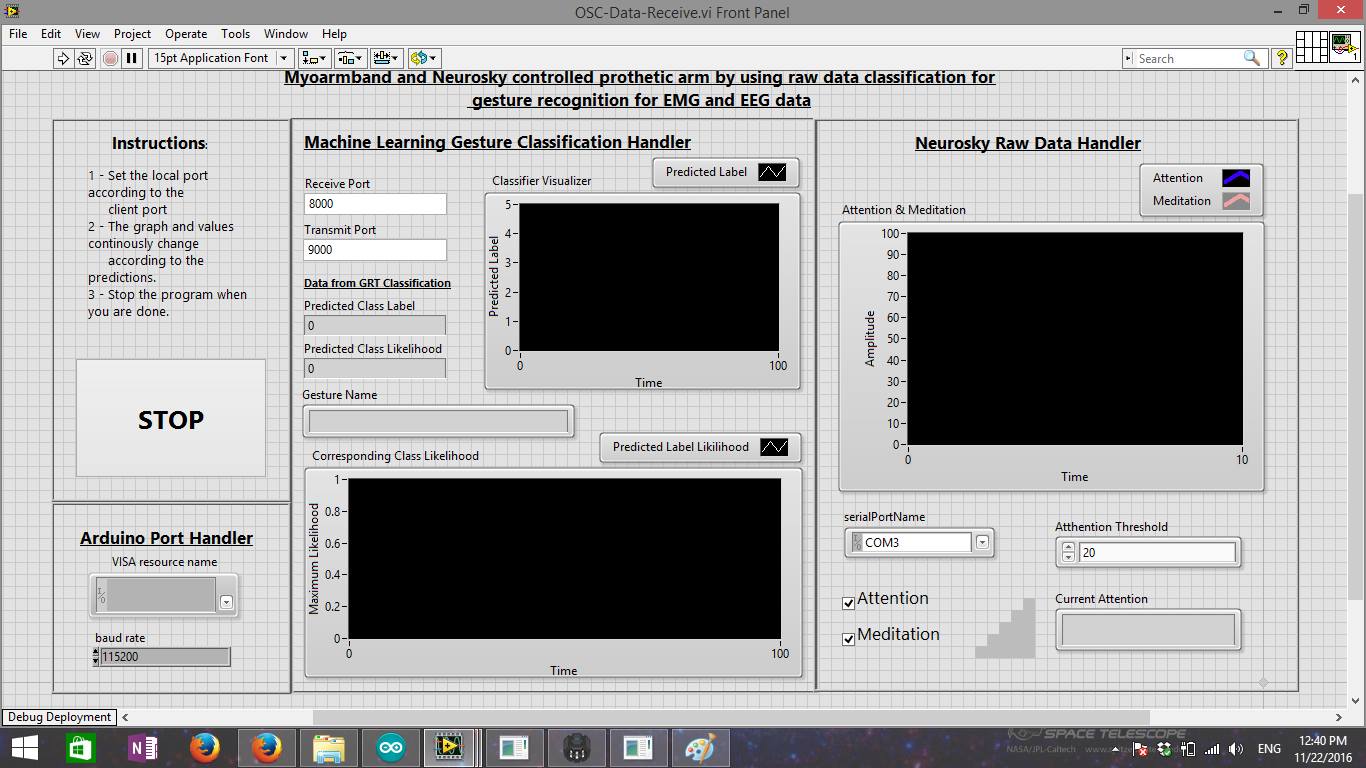

How did I send the data from LabVIEW to the Gesture Recognition Toolkit (GRT)? It turns out that GRT supports UDP-OSC (User Datagram Protocol - Open Sound Control). I cannot explain these protocols properly here (I only read the task-related parts on Google and used them). The best way I understood it is this: think of UDP as a 'voice' and OSC as a 'language'. You need a voice to speak a language. In computing terms, the EMG data was formatted in a specific way (the language) and sent to GRT through UDP (the voice). Then I trained the pipeline and recognized different gestures with the null rejection set to 0.500.
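The 'voice and language' picture can be made concrete with a small Python sketch that builds an OSC message by hand and sends it over UDP. The OSC layout used here (null-terminated strings padded to a multiple of four bytes, then big-endian 32-bit floats) follows the OSC 1.0 specification. The host, the port 5000 and the "/Data" address are assumptions, so check them against the settings of your GRT instance; the function names are hypothetical.

```python
import socket
import struct

def osc_pad(data: bytes) -> bytes:
    """Null-terminate an OSC string and pad it to a multiple of 4 bytes."""
    data += b"\x00"
    return data + b"\x00" * (-len(data) % 4)

def build_osc_message(address, values):
    """Encode `values` as an OSC message of 32-bit floats (the 'language')."""
    type_tags = "," + "f" * len(values)          # one 'f' tag per float argument
    payload = b"".join(struct.pack(">f", float(v)) for v in values)
    return osc_pad(address.encode("ascii")) + osc_pad(type_tags.encode("ascii")) + payload

def send_emg_sample(sample, host="127.0.0.1", port=5000, address="/Data"):
    """Send one EMG sample as a single UDP datagram (the 'voice').

    host, port and address are assumed defaults, not confirmed settings.
    """
    packet = build_osc_message(address, sample)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(packet, (host, port))
    return packet
```

A call such as `send_emg_sample([12, -40, 5, 0, 310, -220, 18, 7])` would send one eight-channel sample as a 52-byte datagram. UDP gives no delivery guarantee, which is usually acceptable for a 200 Hz stream where a fresh sample is always on its way.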

After GRT recognized the custom gesture, which takes a little time (around 1 second, due to the size of the class label filter), I sent this gesture information back to the LabVIEW code through UDP using OSC formatting.

After this was done, I had the 3D model of the InMoov hand printed (I don't have a 3D printer, and in Karachi there are only two places where you can get a 3D model printed). I faced many issues while assembling it, due to poor print quality and my lack of experience in part assembly. Tying the strings of the hand to get the right tension was probably the hardest part of the assembly (thanks to my project partner, Danial, who managed it after a lot of effort). Once the arm was assembled and properly checked with an Arduino and its serial monitor input, I modified the same LabVIEW VI that was receiving the recognized gesture from GRT. To make LabVIEW communicate with the Arduino, I used Virtual Instrument Software Architecture (VISA). (N.B.: If you look into my Rhino XR-4 project, I used LabVIEW - Arduino communication extensively there, which is why I preferred it here.) Specific motors were then rotated as required by the pre-defined gestures, and it was all working.

N.B.: I also used the NeuroSky MindWave to get the attention signal, and compared the current attention value against a threshold before a specific gesture is accepted. This was done to nullify unintentional gesture recognition, or cases where the user does not actually want the arm to move. In other words, to move the arm with a custom gesture, the user needs to be attentive to the task of doing it.
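The attention gate can be summed up in a tiny Python sketch. NeuroSky reports attention on a 0 to 100 scale; the threshold of 60, the null gesture value of 0 and the function name are hypothetical choices for illustration, not the values used in the project.

```python
def gate_gesture(gesture, attention, threshold=60, null_gesture=0):
    """Pass a recognized gesture through only if the user is attentive enough.

    `attention` is the current attention value (0 to 100); below the
    threshold the gesture is replaced by `null_gesture`, so the hand
    does not move.
    """
    return gesture if attention >= threshold else null_gesture
```

With these assumed values, `gate_gesture(3, attention=80)` lets gesture 3 through, while `gate_gesture(3, attention=40)` returns the null gesture.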

Supports:

Project Idea: Danial Sikandar

Project Partner: Danial Sikandar

Project Supervisor: N/A (We did all that without any guidance and out of curiosity)

Project Inspiration: The inspiration came from an ongoing project at Johns Hopkins University, but they used regression analysis (my best guess) to move the prosthetic hand instead of treating it as a machine learning classification problem.

Videos and Images:

We shot many videos while Danial and I were working on this project. I will upload them all as soon as I get some time.

The image below is the final GUI.